AI Systems, Not Models

Clare Kitching, formerly of QuantumBlack and McKinsey and now at Cambiq, was named one of the Global Top 100 Innovators in Data & Analytics. She posted something recently that stopped me mid-scroll:

Everyone talks about AI models. Very few talk about AI systems.

She is right. And StellarView is the proof.

The Model Trap

The industry is drunk on models. GPT-4o. Claude Opus. Gemini. Llama. Every week a new benchmark, a new parameter count, a new capability demo. Engineers spin up API calls, wrap them in a FastAPI endpoint, and call it “AI-powered.”

That is a model call. It is not a system.

A system has:

- Governance: who approved this AI interaction? What standards did it follow? Can you audit it?

- Context management: what did the AI know when it made this decision? Was it the right context?

- Orchestration: how do multiple AI calls compose into a workflow? Who manages the state?

- Verification: did the output actually work? Not “did it look right?” but “did it boot?”

- Feedback loops: does the system learn from its failures? Does the next run benefit from the last?

Clare has been articulating this distinction for years. Her work on AI governance frameworks, practical ones, not “theatre of governance” as she calls it, maps directly to what StellarView builds.

How StellarView Is an AI System

StellarView does not wrap a model. It orchestrates a system:

Governance Layer

Every AI interaction passes through the Prime Directive, a governance framework aligned with the NIST AI RMF, the EU AI Act, and ISO/IEC 42001:2023. Not as a checkbox. As enforceable code in every Smart Prompt template.

The modality settings (Nobility, Gentility, Rage) are Clare’s “embedded governance” in practice. When a project runs in Nobility mode, every AI call enforces 80% test coverage, documentation requirements, and human review gates. When it runs in Rage mode, those constraints are explicitly loosened, and the audit trail records that choice.

No shadow AI. No ambiguity about what the policy is. The policy is in the template.
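To make "the policy is in the template" concrete, here is a minimal sketch of a modality gate. It is not StellarView's actual code: the `Modality` class, the `gate` function, and the `AuditTrail` shape are hypothetical names for illustration, and only Nobility's 80% coverage figure comes from the description above. The Rage values are placeholders for "explicitly loosened."

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Modality:
    name: str
    min_coverage: float   # required test coverage as a fraction, 0.0 to 1.0
    docs_required: bool
    human_review: bool


# Only the 80% figure is taken from the article; the Rage values are assumed.
NOBILITY = Modality("Nobility", min_coverage=0.80, docs_required=True, human_review=True)
RAGE = Modality("Rage", min_coverage=0.0, docs_required=False, human_review=False)


@dataclass
class AuditTrail:
    entries: list = field(default_factory=list)

    def record(self, event: str, **details) -> None:
        stamp = datetime.now(timezone.utc).isoformat()
        self.entries.append({"event": event, "at": stamp, **details})


def gate(modality: Modality, coverage: float, has_docs: bool,
         reviewed: bool, trail: AuditTrail) -> list:
    """Return the rules this result violates and record the decision."""
    violations = []
    if coverage < modality.min_coverage:
        violations.append("coverage")
    if modality.docs_required and not has_docs:
        violations.append("documentation")
    if modality.human_review and not reviewed:
        violations.append("human_review")
    # The modality is written to the trail either way, so a loosened run
    # is as visible to an auditor as a strict one.
    trail.record("gate", modality=modality.name, violations=violations)
    return violations
```

The same result that fails under Nobility passes under Rage, and the trail shows which modality was in force each time. That is the point: loosening the rules is a recorded choice, not a silent one.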

Context Management

The Stellar Context Engine manages what each AI call sees. Smart Prompt templates with variable substitution. Epic context from analysis missions. Galaxy-scoped knowledge from Space Lake. The AI does not hallucinate architecture decisions because it has the validated architecture in its context window.

Clare talks about organizations where “employees don’t know whether policies exist or how to apply them.” In StellarView, the policies are the context. They are injected into every interaction. You cannot not apply them.
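A rough sketch of what "the policies are the context" could look like, using Python's standard `string.Template` for variable substitution. The template text, the `build_prompt` helper, and the placeholder names are assumptions made for illustration, not the real Smart Prompt format.

```python
from string import Template

# Hypothetical template: policies and validated architecture are
# placeholders, so a prompt cannot be rendered without them.
SMART_PROMPT = Template(
    "Policies:\n$policies\n\n"
    "Validated architecture:\n$architecture\n\n"
    "Task:\n$task\n"
)


def build_prompt(task: str, architecture: str, policies: list) -> str:
    """Render the prompt with policies and architecture injected."""
    policy_lines = "\n".join(f"- {p}" for p in policies)
    # substitute() raises KeyError if any placeholder is left unfilled,
    # which is what makes the policies impossible to skip.
    return SMART_PROMPT.substitute(
        task=task, architecture=architecture, policies=policy_lines
    )
```

Using `substitute` rather than `safe_substitute` is the design choice that matters here: a missing policy block is an error, not an empty section.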

Orchestration

Analysis Mission → Execution Mission → Forge Mission. Three mission types, sequenced, with state management between them. Miracle Mode orchestrates all three across an entire epic. Branch chaining ensures each phase builds on the previous one’s verified output.

This is not “call GPT and hope.” This is a multi-phase, state-managed, auditable pipeline.
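In outline, the sequencing might look like the following sketch. The `MissionState` fields, the `make_mission` factory, and the branch naming scheme are all hypothetical. The only things taken from the description above are the three mission types, their order, and the idea that each phase starts from the previous phase's branch.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass(frozen=True)
class MissionState:
    epic: str
    branch: str
    history: tuple = field(default_factory=tuple)


Mission = Callable[[MissionState], MissionState]


def make_mission(name: str) -> Mission:
    """Build a placeholder mission that chains a new branch off the last one."""
    def run(state: MissionState) -> MissionState:
        # Real work (analysis, code generation, boot checks) would go here.
        return MissionState(
            epic=state.epic,
            branch=f"{state.branch}/{name}",
            history=state.history + (name,),
        )
    return run


PIPELINE = [make_mission("analysis"), make_mission("execution"), make_mission("forge")]


def run_epic(epic: str, missions: list, base_branch: str = "main") -> MissionState:
    """Run missions in order, handing each one the previous mission's state."""
    state = MissionState(epic=epic, branch=base_branch)
    for mission in missions:
        state = mission(state)
    return state
```

Because the state is immutable and passed explicitly, the history of which phases ran, and from which branch, is available at the end of the run instead of being scattered across individual calls.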

Verification

The Forge Mission boots every phase. Genesis detects the stack. Install, start, health check. If it fails, Claude Code auto-fixes. Up to three attempts. If still broken, a GitHub issue documents exactly what went wrong.

The model call is one step. The verification is where the system earns trust.
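The boot-fix-retry loop can be sketched as below. This is an illustration under stated assumptions: the three callables stand in for the real health check, the auto-fix step, and the GitHub issue creation, and "up to three attempts" is read here as three fix attempts after the first failed check.

```python
from typing import Callable

MAX_FIX_ATTEMPTS = 3  # from the article; interpreted as fix attempts


def verify_with_repair(
    health_check: Callable[[], bool],
    auto_fix: Callable[[int], None],
    open_issue: Callable[[str], None],
    max_attempts: int = MAX_FIX_ATTEMPTS,
) -> bool:
    """Return True once the app is healthy; open an issue if it never is."""
    if health_check():
        return True
    for attempt in range(1, max_attempts + 1):
        auto_fix(attempt)          # hypothetical hook for the auto-fix step
        if health_check():
            return True
    # Still broken: leave a written record rather than failing silently.
    open_issue(f"health check still failing after {max_attempts} fix attempts")
    return False
```

The loop is bounded on purpose. An unbounded "keep fixing until green" loop burns tokens and hides the failure. A fixed budget followed by a documented issue turns the failure into something a human can act on.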

Feedback Loops

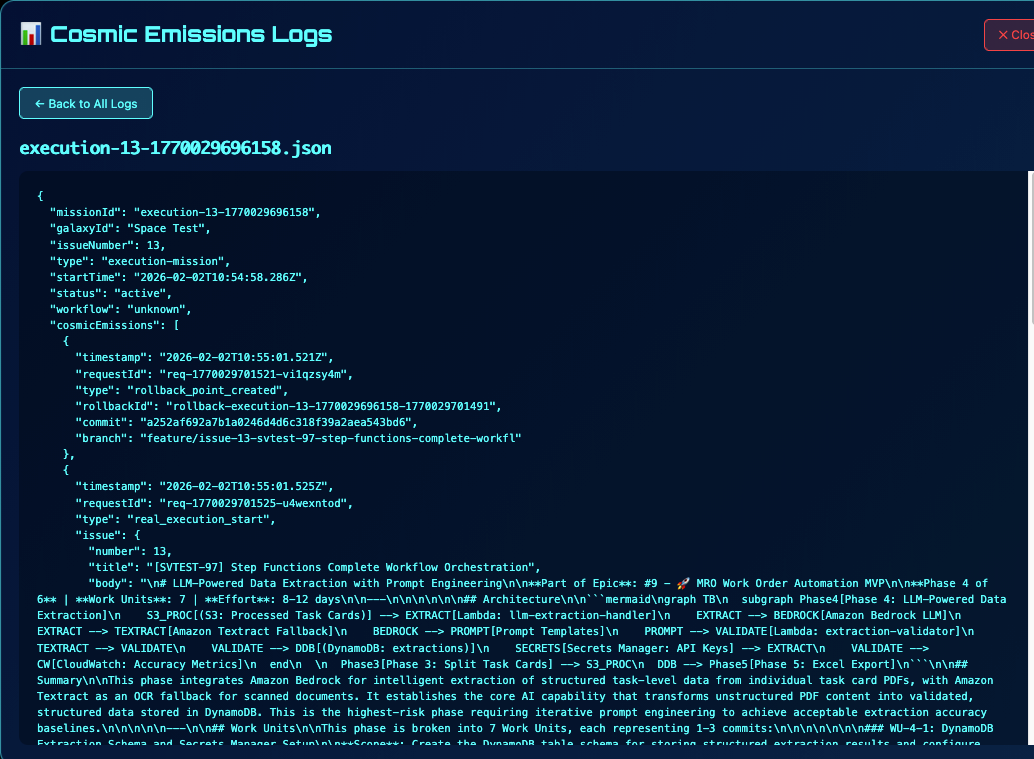

Cosmic emissions record everything. Space Lake ingests the results. The RAG Companion can answer “what went wrong in the SolarScore Phase 7 execution?” grounded in actual logs. The Skill Builder captures patterns that compound across engagements.

The system gets smarter. The model stays the same.
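As a simplified picture of that loop, the sketch below records each event as a JSON line and pulls back the failures for one project and phase. The `EmissionLog` class and its field names are hypothetical, and the plain filter stands in for the retrieval step. It is not how Space Lake or the RAG Companion are implemented.

```python
import json


class EmissionLog:
    """Append-only, in-memory log of run events (one JSON line per event)."""

    def __init__(self) -> None:
        self._lines = []

    def emit(self, project: str, phase: int, status: str, detail: str) -> None:
        record = {"project": project, "phase": phase, "status": status, "detail": detail}
        self._lines.append(json.dumps(record))

    def failures(self, project: str, phase: int) -> list:
        """Return the failure details for one project phase, oldest first."""
        records = (json.loads(line) for line in self._lines)
        return [
            r["detail"]
            for r in records
            if r["project"] == project and r["phase"] == phase and r["status"] == "failed"
        ]
```

Whatever answers the question "what went wrong?" is then quoting recorded events, not reconstructing them from memory.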

The Governance Clare Advocates

Clare’s most pointed insight: governance cannot be “theatre.” Policies that exist on paper but are not understood or implemented expose organizations to regulatory and reputational risk.

StellarView’s answer: governance is code.

{
  "analysisDepth": "full",
  "architectureValidation": "required",
  "testGeneration": "required",
  "documentationExpectation": "complete",
  "humanCheckpoint": "required",
  "scopeEnforcement": "strict"
}

That is a Nobility modality configuration. It is not a policy document. It is enforced at runtime. Every AI interaction that runs under Nobility follows these rules because the Smart Prompt template includes them. The cosmic emissions prove compliance.
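One way to picture "the template includes them" is the sketch below. The configuration keys and values are copied from the Nobility example above. The `apply_governance` and `is_governed` helpers and the rendered wording are assumptions for illustration only.

```python
NOBILITY_CONFIG = {
    "analysisDepth": "full",
    "architectureValidation": "required",
    "testGeneration": "required",
    "documentationExpectation": "complete",
    "humanCheckpoint": "required",
    "scopeEnforcement": "strict",
}


def rule_lines(config: dict) -> list:
    """Turn each configuration entry into one line of prompt text."""
    return [f"- {key}: {value}" for key, value in config.items()]


def apply_governance(prompt_body: str, config: dict) -> str:
    """Prepend the governance rules to a prompt body."""
    rules = "\n".join(rule_lines(config))
    return f"Governance rules:\n{rules}\n\n{prompt_body}"


def is_governed(prompt: str, config: dict) -> bool:
    """Check that every rule in the config appears verbatim in the prompt."""
    return all(line in prompt for line in rule_lines(config))
```

A check like `is_governed` can run just before each model call, so a prompt that lost its rules is rejected at runtime instead of being discovered in a later review.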

When an auditor asks “did your AI follow governance standards?” the answer is not “we have a policy.” The answer is “here are 47 cosmic emissions showing every AI interaction, the standards applied, the tokens consumed, and the outputs verified.”

That is what Clare means by embedded governance.

What This Means for the Practice

Clare is describing the mature state of AI adoption. Not experimentation. Not proof-of-concepts. Scaled, governed, auditable AI systems that organizations trust enough to put in production.

StellarView is built for that state. Not because we anticipated Clare’s framing, but because we needed it ourselves. When you run Miracle Mode overnight across four client galaxies, you need governance that is embedded, not optional. You need audit trails that are automatic, not manual. You need verification that is systematic, not hopeful.

The model is a component. The system is the practice.

Clare Kitching’s work: LinkedIn · Cambiq

See the system in action: From Vibe to Running App in One Day